Financial System Efficiency – Can we predict the stock market?

How Efficient Is The Financial System?

Background

In 1948 Claude Shannon wrote a paper entitled ‘A Mathematical Theory of Communication,’ later expanding this into a book, ‘The Mathematical Theory of Communication.’ Shannon’s work was the foundation of the stunning achievements of information theory. In many respects, it deserves recognition as the foundation of complexity theory as well.

The path to complexity theory was led (in part) by a scientist named Warren Weaver, who had an early grasp of Shannon’s ideas. With the power of Shannon’s concepts, Weaver was able to divide the last few centuries of scientific inquiry into three broad groups. First, the study of simple, one- or two-variable problems. Second, problems of “disorganized complexity,” which involve billions of variables and can only be approached with statistical and probabilistic tools. These tools apply to a wide array of artificial and natural phenomena, such as the behavior of molecules in a gas, gene pool patterns, population growth rates, and even the actuarial sciences, which help life insurance companies (or credit card companies) profit despite their limited knowledge of any one person’s condition. A third group began to emerge and is still a field in its infancy: “organized complexity.” These are the problems that lie in the middle region between simple (static) problems and the billion-variable problems (noise) of disorganized complexity. They still involve a large number of variables, but the size of the system is in fact a secondary characteristic, as Weaver describes:

Much more important than the mere number of variables, is the fact that these variables are all interrelated….These problems, as contrasted with the disorganized situations with which statistics can cope, show the essential feature of organization. We will therefore refer to this group of problems as those of organized complexity. [Johnson 2002]

They are sometimes described as self-organizing systems, which model animal colonies, predator-prey relationships, economic systems, weather systems (the Butterfly effect), social patterns and, mainly because of their dependence on feedback mechanisms, cybernetics. It is important to note that these systems require an understanding of the local interacting agents in order to properly study them. The problem in studying financial markets as a self-organizing system, then, is understanding the interaction between its local agents. I propose that in order to study the stock market we must explore both the market system and the individual investors competing for profit. This is not a new idea; almost every field of science has attempted to explain financial market behavior, with economists, mathematicians, biologists, psychologists and neurologists among those who have tried. In order to unify the ideas from these fields and study the financial markets effectively, we will first need a powerful theory of how the market functions.

Efficient Markets

Louis Bachelier, a student of the great French mathematician Henri Poincaré, first expressed the efficient market hypothesis. His 1900 paper, “The Theory of Speculation,” states that financial markets are “informationally efficient” [Malkiel 1973]. Bachelier reasons that in efficient markets, the competition among many intelligent participants leads to a situation where, at any point in time, actual prices of individual securities already reflect the effects of information based on events that have already occurred and on events which, as of now, the market expects to take place in the future. There are three forms of the efficient market hypothesis (EMH): weak, semi-strong and strong.

The weak form of EMH states that future prices cannot be predicted by analyzing prices from the past; technical analysis of stock prices is therefore rendered ineffective at any sort of prediction (e.g. Jim Cramer). The semi-strong form of EMH states that all public information is built into a stock price, implying that fundamental analysis is also unable to produce any excess returns (proponents of semi-strong EMH will state that investors such as Warren Buffett are merely outliers). The strong form of EMH states that all information, both private and public, is built into a stock price, and implies that even insider trading cannot reliably produce excess returns (e.g. Enron executives, Bre-X and Martha Stewart still took a huge gamble).

Each form of EMH has been critiqued in different ways and to different degrees since its conception. Today, academics and economists almost universally reject the strong form of EMH, although debate continues over the remaining forms. The original empirical work supporting the notion of randomness in stock prices looked at measures of short-run serial correlations between successive stock price changes [Malkiel 2003]. In general, this work supports the view that the stock market has no memory and past behavior is not useful in predicting how it will behave in the future. This is why the EMH is associated with the idea of a “random walk,” which is used to suggest that all price changes in a financial market are random departures from previous prices. The logic is that price changes depend on news, and the nature of news is that it is unpredictable.

The main explanations offered for the existence of autocorrelation (which is a sign of genuine pricing inefficiency) in the financial markets are partial price adjustment (PPA), where trades occur at prices that do not fully reflect available information; bid-ask bounce (BAB); nonsynchronous trading (NT); and time-varying risk premia (TVRP) [Cooper 1999].

Support For EMH

In a recent paper, Burton Malkiel (the author of “A Random Walk Down Wall Street”) examines the many attacks on the efficient market hypothesis and the belief that stock prices are partially predictable. He explores long-run reversals (buying stocks with poor returns and shorting stocks with high returns, in the hope that the market has overreacted); seasonal and day-of-the-week patterns (e.g. the January Effect, and higher returns at the turn of the month and the turn of the week); and prediction based on valuation parameters such as dividends, P/E ratios and interest rates. He also explores cross-sectional patterns such as the size effect (the tendency for small-cap stocks to generate larger returns than large-cap stocks) and value stocks vs. growth stocks. He concludes that these and many other “anomalies” are not robust or dependable across different time periods. The gains observed turn out to be extremely small or non-existent once trading costs are taken into account. Moreover, many of these patterns, even if they did exist, could self-destruct in the future, as many of them have already done (such as the January Effect). He highlights his findings with a quote from Richard Roll, an academic financial economist who is also a portfolio manager:

I have personally tried to invest money, my client’s money and my own, in every single anomaly and predictive device that academics have dreamed up…I have attempted to exploit the so-called year-end anomalies and a whole variety of strategies supposedly documented by academic research. And I have yet to make a nickel on any of these supposed market inefficiencies….a true market inefficiency ought to be an exploitable opportunity. If there’s nothing investors can exploit in a systematic way, time in and time out, then it’s very hard to say that information is not being properly incorporated into stock prices. [Malkiel 2003]

Most importantly, Malkiel concludes that market pricing is not always perfect (which is most obvious during the many financial bubbles of the past, such as the internet boom). He does not deny the psychological factors that influence security prices. He is skeptical that any of these documented “predictable patterns” were ever sufficiently robust as to have created a profitable investment opportunity, and after they have been discovered and publicized, they will certainly not allow investors to earn excess returns. This is an important departure point in our exploration of EMH. The conclusion is that although market inefficiencies do exist (and have been documented), once they become commonly known, they cease to exist. Thus, our stock markets are far more efficient and far less predictable than some recent academic papers would have us believe.

A recent book by John Allen Paulos entitled “A Mathematician Plays the Stock Market” attempts to debunk common market strategy “myths” such as technical analysis. He states that if the movement of stock prices is random or near-random, then the tools of technical analysis are nothing more than comforting blather giving the illusion of control and the pleasure of specialized jargon. He notes that social scientists don’t seem to realize that if you search for a correlation between any two randomly selected attributes in a very large population, you will likely find some small but statistically significant association. An important point from this book is Paulos’ suspicion that markets are best modeled as nonlinear systems (using the Butterfly effect analogy, reinforcing my view that the market is one of organized complexity) that follow fractal price trajectories as opposed to random walks. He explains how mathematician Benoit Mandelbrot (the discoverer of fractals) constructed multifractal “forgeries” of stock price movements, which are indistinguishable from real stock price movements. In contrast, more conventional EMH assumptions about price movements (those of strict random-walk theorists) lead to patterns that are noticeably different from real price movements [Paulos 2003].

It is becoming clear that this dynamic procession of inefficiencies (bull and bear markets, bubbles, occasional risk-adjusted opportunities, etc.) can no longer be fully explained by the cold, calculating EMH; a new, more flexible theory is needed to model both the market and the individual investors. Underlying the EMH is the far-reaching assumption that market participants are rational economic beings, always acting in self-interest and making optimal decisions by trading off costs and benefits weighted by statistically correct probabilities and marginal utilities. Any investor (myself included) will admit this is not the case, nor is it even possible.

Before I discuss current techniques in data mining that attempt to challenge the EMH, I will introduce a new theory which better explains many of the market anomalies that have been documented, while still maintaining the powerful assumptions from EMH that markets are very efficient. With this new theory we can better understand past debates regarding market efficiency, and critically examine the results of modern empirical tests of market efficiencies that use tools of data mining.

The Adaptive Market Hypothesis

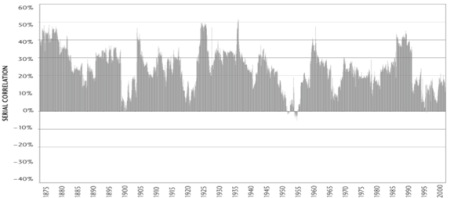

In 2005, Andrew Lo set out a new theory in his paper, “Reconciling Efficient Markets with Behavioral Finance: The Adaptive Markets Hypothesis.” Based on evolutionary principles, the Adaptive Markets Hypothesis (AMH) implies that the degree of market efficiency is related to the environmental factors characterizing market ecology, such as the number of competitors in the market, the magnitude of profit opportunities available, and the adaptability of the market participants. No longer are we to view investors as rational economic automatons; we must take into account common investor irrationalities (such as loss aversion, overconfidence, overreaction and mental accounting), which Lo argues are consistent with an evolutionary model of individuals adapting to a changing environment. The AMH is still a qualitative framework, yet it yields surprisingly concrete insights when applied to practical settings. It is based on well-known principles of evolutionary biology (competition, mutation, and natural selection), arguing that the impact of these forces on financial institutions and investors determines the waxing and waning of investment products, businesses, industries and fortunes. For example: (1) the equity risk premium is not constant through time but varies according to the recent path of the stock market and the demographics of investors during that path; (2) all investment products tend to experience cycles of superior and inferior performance; (3) market efficiency is not an all-or-none condition but a characteristic that varies continuously over time and across markets; and (4) individual and institutional risk preferences are not likely to be stable over time. This fits nicely with previous issues regarding EMH, such as market bubbles (which are inefficient) and the waxing and waning of technical, fundamental and even seasonal patterns.

A more striking example can be found by computing the rolling first-order autocorrelation of monthly returns of the S&P Composite Index from January 1871 to April 2003 [Lo 2004].

[first-order autocorrelation of monthly returns]
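To make the idea of a rolling first-order autocorrelation concrete, here is a minimal sketch in plain Python. The 60-month window and the synthetic return series are my own assumptions for illustration; they are not Lo's data and the window length is not taken from his paper. Under a pure random walk each rolling estimate should hover near zero, so persistent swings away from zero are what would suggest time-varying efficiency.

```python
import random

def lag1_autocorr(returns):
    """First-order (lag-1) autocorrelation of a sequence of returns."""
    n = len(returns)
    mean = sum(returns) / n
    var = sum((r - mean) ** 2 for r in returns)
    if var == 0:
        return 0.0  # a constant series carries no correlation information
    cov = sum((returns[i] - mean) * (returns[i - 1] - mean) for i in range(1, n))
    return cov / var

def rolling_lag1_autocorr(returns, window):
    """Lag-1 autocorrelation over each trailing block of `window` observations."""
    return [
        lag1_autocorr(returns[end - window:end])
        for end in range(window, len(returns) + 1)
    ]

# Synthetic stand-in for monthly returns (hypothetical, NOT real index data).
rng = random.Random(0)
monthly_returns = [rng.gauss(0.005, 0.04) for _ in range(600)]

# Assumed window: 60 months (five years).
rolling = rolling_lag1_autocorr(monthly_returns, window=60)
```

Plotting `rolling` against time for a real return series would give a chart of the same kind as the one described above.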

I find the key to AMH’s strength is its new perspective on investor motive. Since the 1950s, researchers (such as H. Simon in 1955) have suggested that individuals are hardly capable of the kind of optimization that neoclassical economics calls for in the standard theory of consumer choice. Lo argues that an evolutionary perspective provides the missing ingredient. The proper response to the question of how individuals determine the point at which their optimizing behavior is satisfactory is this: such points are determined not analytically, but through trial and error and, of course, natural selection (based on profit and loss). In comparison, the EMH may be viewed as the frictionless ideal that would exist if there were no capital market imperfections such as transaction costs, taxes, institutional rigidities and limits to the cognitive reasoning abilities of investors.

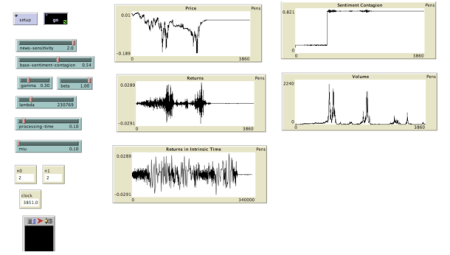

Some interesting research related to the AMH is being done with multi-agent programming environments such as NetLogo. In 2007, Carlos Pedro Gonçalves developed a paper and accompanying model entitled “An Evolutionary Quantum Game Model of Financial Market Dynamics – Theory and Evidence.” The paper explores recent developments in quantum game theory, which is becoming an important field in the research of economic phenomena.

It also uses a model which, unlike other artificial financial market models, includes fluctuations in the number and sentiment of investors through time. The model is tested against actual market data, and is shown to reproduce some of the main multifractal signatures present in actual markets. This study is outside the scope of this paper, although I encourage an exploration of his paper and model, which are provided freely from the NetLogo community database [Gonçalves 2008]:

[Screenshot of the NetLogo model]

With this perspective, we can better approach the current research related to market efficiency within the fields of data mining and machine learning. I will outline some recent work and suggest an avenue for future research with market adaptivity in mind.

Machine Learning & Data Mining

“October. This is one of the peculiarly dangerous months to speculate in stocks. The others are July, January, September, April, November, May, March, June, December, August and February” – Mark Twain, 1894.

Investors have been fascinated by the possibility of finding systematic patterns in stock prices that, once detected, promise easy profits when exploited by simple trading rules. Consequently, there is a long tradition among investors and academics of searching through stock market data, going back to the 1930s [Sullivan 2001]. As a result, common stock market indexes such as the Dow Jones Industrial Average and the Standard & Poor’s 500 index are among the most heavily investigated data sets in the social sciences. Data mining is the process of extracting hidden patterns and rule sets from large amounts of raw data. Researchers from the University of Western Ontario (John Nuttall, Robert Yan and Charles Ling) recently explored the application of data mining techniques to a study done by M. Cooper [Cooper 1999] in their paper “Can Machine Learning Challenge the Efficient Market Hypothesis?”

Cooper’s study presented evidence of predictability in a sample he constructed and filtered (based on lagged return and lagged volume information) to uncover weekly over-reaction profits on large-cap NYSE and AMEX securities (buying past losers, selling past winners). He found that decreasing-volume stocks experience greater reversals and positive autocorrelation. He uses only stock prices and volume information in his study. His real-time simulations of the filter strategies suggest that an investor who pursues the filter strategy with relatively low transaction costs will strongly (by roughly +1.00% per week) outperform an investor who follows a buy-and-hold strategy.

Cooper’s method learns a prediction function G(X) on historical data (1964-1977, 1978-1993, 1994-2004). The universe he studies is the set of 300 stocks with the largest market cap on the NYSE/AMEX. Each filter is partitioned into 1000 cells, denoted by C(j), j = 1,…,1000. Cooper calculated the average return of stocks in the cell C(j) for week t, denoted RCL(j,t). The predictor variables used are:

Predictor 1: weekly return for stock j for week t-1 (lagged one week)

Predictor 2: weekly return of stock j for week t-2 (lagged two weeks)

Predictor 3: weekly growth of stock j in volume (lagged one week)

The prediction function of G(X) is shown as follows:

Cooper uses the function G(X) to predict the weekly return of a stock (R(t)) at time t. Each week Cooper chooses the long and short stocks based on equal weighting – he picks up stocks using a set of best trained rules. The performance of Cooper’s method can be evaluated by comparing the average real return of portfolios with the average market return. Based on a low transaction cost (0.5% round trip) his long portfolio performs better than the average market return by roughly 1% weekly.
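The cell-based idea described above (partition the predictor space into cells, then use the average historical return of each cell as the forecast) can be sketched as follows. This is only an illustration under my own assumptions: the equal-width binning, the bin bounds, the 10 bins per predictor and every function name here are hypothetical, and Cooper's actual filter construction is not reproduced.

```python
from collections import defaultdict

def bin_index(value, lo, hi, n_bins):
    """Map a value to one of n_bins equal-width bins on [lo, hi], clamping outliers."""
    if value <= lo:
        return 0
    if value >= hi:
        return n_bins - 1
    return int((value - lo) / (hi - lo) * n_bins)

def cell_of(x, bounds, n_bins):
    """Cell of a predictor vector x = (return t-1, return t-2, volume growth t-1)."""
    return tuple(bin_index(v, lo, hi, n_bins) for v, (lo, hi) in zip(x, bounds))

def fit_cell_means(samples, bounds, n_bins=10):
    """samples: iterable of (predictors, next_week_return). Returns {cell: mean return}."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for x, next_return in samples:
        cell = cell_of(x, bounds, n_bins)
        sums[cell] += next_return
        counts[cell] += 1
    return {cell: sums[cell] / counts[cell] for cell in sums}

def predict(x, cell_means, bounds, n_bins=10, default=0.0):
    """Forecast = average training return of the cell x falls into (default if unseen)."""
    return cell_means.get(cell_of(x, bounds, n_bins), default)

# Hypothetical bounds for the three predictors and a toy training set.
bounds = [(-0.2, 0.2), (-0.2, 0.2), (-1.0, 1.0)]
train = [
    ((-0.10, 0.010, -0.30), 0.02),
    ((-0.11, 0.015, -0.25), 0.04),
    ((0.09, 0.000, 0.50), -0.01),
]
cell_means = fit_cell_means(train, bounds)
forecast = predict((-0.105, 0.012, -0.28), cell_means, bounds)
```

With 10 bins on each of three predictors this yields 1000 cells, which matches the cell count mentioned above, although whether Cooper's cells were formed this way is an assumption. A long/short portfolio would then buy the stocks with the highest forecasts and short those with the lowest.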

In their study, Yan, Nuttall & Ling identify four extensions that could improve on Cooper’s work:

1. Larger stock universe

2. More powerful predictors

3. Advanced Learning method

4. Different portfolio formation method.

Their paper focuses entirely on improvements to the machine learning method.

Improvements to Machine Learning

The following is a rough outline of the machine learning improvements applied to Cooper’s paper; for full details of their ML method, I refer the technical reader to their paper [Ling, Nuttall, Yan 2006].

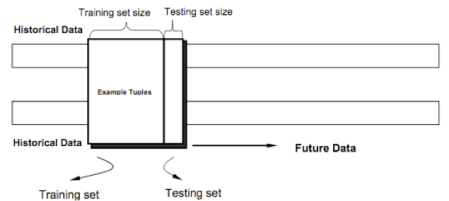

They regard Cooper’s implicit method of return prediction as a primitive application of the principles of machine learning. They propose a new ML method, PKR (Prototype Kernel Regression), and its variant APKR (Adaptive Prototype Kernel Regression). In the current framework, the prediction of stock returns depends on two factors: a predictor set and a general prediction function. The key difference is how the function G(X,t) is found. The machine learning solution is to learn G(X,t) from historical data. During the training phase, historical data (training data) is used to learn a model G(X,t) that represents the data. They reasonably assume that this model can partially predict data beyond the training data (the testing data). In the testing phase, this model is applied to the testing data to evaluate performance. If performance is acceptable, they use that model to predict unknown future data.

Their model is no longer concerned with static market modeling, which consists of one training process and one testing process. In stock market data, the time dimension introduces more complexity to the modeling, so stock data is ordered by trading date. Random re-sampling methods are not appropriate here because of data snooping (generally speaking, a statistical bias caused when testing data is used as training data); this rules out otherwise popular methods such as cross-validation and bootstrapping, which would mix later observations into the training set. The two common methods for updating training data before the next testing period are referred to as the growing window method and the moving (or sliding) window method. The growing window allows the training set to grow in size, while the moving window stays a constant size and discards trailing samples (the earliest data).

[Moving Window Methods]

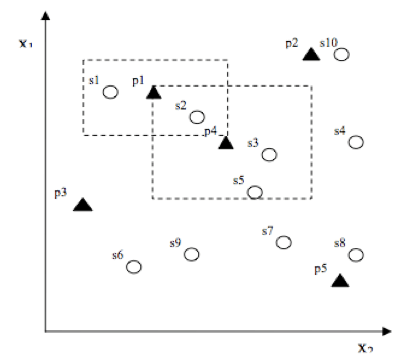
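The two window schemes can be sketched as index generators over time-ordered samples. This is a minimal illustration with names and sizes of my own choosing, not the authors' code; the point is that every test block lies strictly after the data it was trained on, so the test data is never snooped.

```python
def growing_windows(n_samples, initial_train, test_size):
    """Yield (train, test) index ranges; the training set grows at every step."""
    start = initial_train
    while start + test_size <= n_samples:
        yield range(0, start), range(start, start + test_size)
        start += test_size

def sliding_windows(n_samples, train_size, test_size):
    """Yield (train, test) index ranges; the training set keeps a fixed size
    and drops the earliest samples as it moves forward."""
    start = train_size
    while start + test_size <= n_samples:
        yield range(start - train_size, start), range(start, start + test_size)
        start += test_size

# Toy example: 10 weekly observations, train on 4, test on the next 2.
for train, test in sliding_windows(10, train_size=4, test_size=2):
    print(list(train), "->", list(test))
```

For 10 samples this prints three splits: weeks 0-3 predicting 4-5, weeks 2-5 predicting 6-7, and weeks 4-7 predicting 8-9. Swapping in `growing_windows` keeps week 0 in every training set instead.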

The basic form of PKR produces a mapping from all n-dimensional input samples to l n-dimensional prototypes, where l is the total number of prototypes. Their PKR method uses a grid initialization process to decide the number and positions of all prototypes. In this way PKR constructs a mapping from input samples to prototypes.

[Samples & Prototypes]
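As a rough picture of what a grid of prototypes plus kernel regression might look like, here is a generic sketch. To be clear, this is not the authors' PKR algorithm: the nearest-prototype assignment, the Gaussian kernel, the count weighting and the bandwidth are all assumptions of mine, chosen only to illustrate mapping samples onto a fixed grid and predicting from the prototypes rather than from the raw samples.

```python
import itertools
import math

def make_grid(bounds, points_per_dim):
    """Place prototypes on a regular grid over the predictor space."""
    axes = []
    for lo, hi in bounds:
        step = (hi - lo) / (points_per_dim - 1)
        axes.append([lo + i * step for i in range(points_per_dim)])
    return [tuple(p) for p in itertools.product(*axes)]

def sq_dist(a, b):
    return sum((u - v) ** 2 for u, v in zip(a, b))

def fit_prototypes(samples, prototypes):
    """Map each (x, y) sample to its nearest prototype.
    Returns {prototype: (mean target, sample count)} for occupied prototypes."""
    acc = {}
    for x, y in samples:
        nearest = min(prototypes, key=lambda q: sq_dist(x, q))
        total, count = acc.get(nearest, (0.0, 0))
        acc[nearest] = (total + y, count + 1)
    return {p: (total / count, count) for p, (total, count) in acc.items()}

def predict(x, fitted, bandwidth):
    """Gaussian-kernel weighted average of prototype means (weighted by counts)."""
    num = den = 0.0
    for p, (mean_y, count) in fitted.items():
        w = count * math.exp(-sq_dist(x, p) / (2 * bandwidth ** 2))
        num += w * mean_y
        den += w
    return num / den if den > 0 else 0.0

# Toy two-predictor example with a 3 x 3 grid (9 prototypes).
grid = make_grid([(0.0, 1.0), (0.0, 1.0)], points_per_dim=3)
samples = [((0.1, 0.1), 1.0), ((0.0, 0.2), 3.0), ((0.9, 0.9), -1.0)]
fitted = fit_prototypes(samples, grid)
estimate = predict((0.0, 0.0), fitted, bandwidth=0.1)
```

The appeal of this kind of structure is cost: prediction touches at most one entry per prototype instead of one per training sample.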

Because this structure may not be effective in situations where the number of prototypes must be adaptive (since the dispersal of samples is of varying density), they also propose an Adaptive PKR prototype initialization process to search for the “best” prototype positions and reduce the computation cost. APKR adopts tree-structured prototypes. The entire sample space is represented by a root prototype with uniformly distributed children. The training of the APKR prototypes is a bottom-up process; data is first mapped to all the leaf nodes. After the training process, the APKR prototype structure converges to a stable state with more prototypes assigned to regions of minority samples, in which we are interested. This structure is effective because it better represents noisy data and reduces the computational resources required (a tree height of 3 was used).

They tested their methods as follows: for each time period (t), they formed equally weighted portfolios of the stocks picked by PKR and APKR. All stocks in the portfolios are held for one time period and then sold. They used the four most important predictor combinations based on the lessons learned from their previous study (the combinations 1, 1+3, 1+2+3 and 1+2). They repeated this process along a timeline. The average portfolio return over all time periods is used as the performance measure. The results of these combinations for the three time periods tested are shown below:

They also compared the performance of their method based on the 1+3 predictor combination (previous week’s price and previous week’s volume) with that of a random walk (RW) model and Cooper’s filter method. The results show an improvement over Cooper’s method: the average margin of PKR over Cooper’s method is 14.8%. They also showed that APKR improved on the portfolio return of PKR, and that all combined portfolios of APKR, PKR and Cooper’s method significantly outperform those of the RW methods (clearly not conforming to EMH).

They also showed that PKR and APKR returns did not degrade with high transaction costs (1%).

Further Improvements

Nuttall, Yan and Ling showed that Cooper’s method of stock selection could be improved with more sophisticated ML approaches, PKR and APKR. They state that their work seems to seriously challenge the weak form of EMH, suggesting that machine learning methods can find weak and useful regularities in predicting stock returns. They also suggest that APKR could be improved in several directions.

Now, with the Adaptive Markets Hypothesis in mind, I intend to suggest improvements on the portfolio formation method and predictor variables used in the experiments done by Cooper and Nuttall.

Predictor Variable Improvements

The predictor variables used in Cooper’s experiment were simple:

Predictor 1: weekly return for stock j for week t-1 (lagged one week)

Predictor 2: weekly return of stock j for week t-2 (lagged two weeks)

Predictor 3: weekly growth of stock j in volume (lagged one week)

An important metric used in almost all financial models is volatility. Volatility refers to the standard deviation of the continuously compounded returns of a financial instrument. It can be used to quantify the risk of a financial instrument over a certain time period. I propose to include two additional volatility predictor variables. These would measure both price volatility and volume volatility, both of which are important factors for the day trader – which is the best model of this strategy.

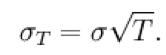

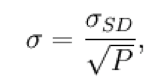

Volatility does not directly imply direction; instead it implies the magnitude of change either positive or negative. It can be expressed as:

where sigma is based on the standard deviation and the time period (P) of returns:

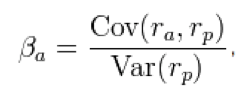
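A volatility predictor of this kind could be computed as sketched below. I am assuming the common convention in which the standard deviation of log returns is annualized as sigma = sigma_SD / sqrt(P), with P the length of one return period in years, which is the same as multiplying by the square root of the number of periods per year. The function names and the sample series are hypothetical. The same function can be applied to a volume series to get the proposed volume volatility.

```python
import math
import statistics

def log_changes(series):
    """Continuously compounded (log) changes between consecutive observations."""
    return [math.log(b / a) for a, b in zip(series, series[1:])]

def annualized_volatility(series, periods_per_year):
    """Sample standard deviation of log changes, scaled to a yearly horizon.

    Equivalent to sigma_SD / sqrt(P) with P = 1 / periods_per_year.
    """
    sd = statistics.stdev(log_changes(series))
    return sd * math.sqrt(periods_per_year)

# Hypothetical weekly closing prices and weekly share volumes.
weekly_close = [100.0, 110.0, 100.0, 110.0, 100.0]
weekly_volume = [1.0e6, 1.2e6, 0.9e6, 1.5e6, 1.1e6]

price_vol = annualized_volatility(weekly_close, periods_per_year=52)
volume_vol = annualized_volatility(weekly_volume, periods_per_year=52)
```

Note that the result says nothing about direction: the price series above ends where it started, yet its volatility is high.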

A related measure is called the beta coefficient, which describes how a stock is related to the market as a whole. It is a key parameter in the capital asset pricing model, and is estimated using regression analysis against the market index. Beta describes correlated relative volatility or financial elasticity.

A stock with a beta of 0 has a price that is independent of market movement. A positive beta follows the market, while a negative beta inversely follows the market. The beta coefficient is given by β = Cov(ra, rp) / Var(rp), where rp measures the rate of return of the market index and ra measures the rate of return of a specific asset. I propose to use this predictor variable for future ML experiments.
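Beta is conventionally estimated as the covariance between asset and market returns divided by the variance of market returns, which is also the slope of a simple regression of asset returns on market returns. A minimal sketch, with hypothetical return series and function name:

```python
def beta(asset_returns, market_returns):
    """Estimate beta as Cov(asset, market) / Var(market) using sample moments."""
    if len(asset_returns) != len(market_returns):
        raise ValueError("return series must have the same length")
    n = len(market_returns)
    if n < 2:
        raise ValueError("need at least two observations")
    mean_a = sum(asset_returns) / n
    mean_m = sum(market_returns) / n
    cov = sum(
        (a - mean_a) * (m - mean_m) for a, m in zip(asset_returns, market_returns)
    ) / (n - 1)
    var_m = sum((m - mean_m) ** 2 for m in market_returns) / (n - 1)
    if var_m == 0:
        raise ValueError("market returns have zero variance")
    return cov / var_m

# Hypothetical weekly returns: the asset moves twice as much as the market.
market = [0.01, -0.02, 0.03, 0.00]
asset = [2 * r for r in market]
asset_beta = beta(asset, market)
```

Here `asset_beta` comes out at 2, meaning the asset amplifies market moves; a series that mirrors the market would give a beta of -1.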

Portfolio Selection Improvements

Cooper’s experiment made portfolio selections based on equal weighting of each stock. I propose to use a variable weighting method based on the Kelly criterion. The Kelly criterion, developed by J.L. Kelly Jr. in the 1950s, is a formula used to determine the optimal size of a series of bets [Kelly 1956]. In most gambling scenarios, and some investing scenarios, the Kelly strategy will do better than any essentially different strategy in the long run. The ‘Kelly bet’ calculation shown below gives the percentage of the available bankroll to stake.

K% = W – [(1 – W) / R]

The winning probability of a selection method can be estimated by looking at its performance over the past 10 weeks. Obtain W by dividing the number of positive weeks by the total number of weeks. R is the win/loss ratio and is found by dividing the average gain of the positive trades by the average loss of the negative trades.

Instead of buying equal amounts of each stock in a portfolio, a percentage would be invested based on the Kelly bet. This strategy could be used along with my other proposed improvements on the ML method.
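The Kelly calculation can be sketched directly from the formula K% = W – [(1 – W) / R]. In this sketch W is taken as the fraction of winning weeks among all weeks in the lookback, R as the average gain divided by the average loss, and the ten weekly returns are made up for illustration.

```python
def kelly_fraction(period_returns):
    """Kelly fraction K = W - (1 - W) / R from a history of per-period returns.

    W: fraction of periods with a positive return.
    R: average gain of winning periods / average loss of losing periods.
    """
    wins = [r for r in period_returns if r > 0]
    losses = [-r for r in period_returns if r < 0]
    if not wins or not losses:
        raise ValueError("need at least one winning and one losing period")
    w = len(wins) / len(period_returns)
    r = (sum(wins) / len(wins)) / (sum(losses) / len(losses))
    return w - (1 - w) / r

# Hypothetical last 10 weeks: six gains of 2%, four losses of 1%.
last_ten_weeks = [0.02, -0.01, 0.02, 0.02, -0.01, 0.02, -0.01, 0.02, 0.02, -0.01]
k = kelly_fraction(last_ten_weeks)

# A negative Kelly fraction means the edge is negative, so stake nothing.
stake = max(0.0, k)
```

With W = 0.6 and R = 2 this gives K = 0.6 – 0.4 / 2 = 0.4, i.e. 40% of the bankroll. Ten weeks is a very short sample, so in practice many traders stake only a fraction of the Kelly amount.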

Time Frame Improvements

Investing can be broken into two broad categories: fundamental (buy & hold) and technical (day traders). The strategies outlined in this report fall under the technical category since we are attempting to find short-term patterns that can lead to profit. Technical investors generally buy and sell stock inside of a day, sometimes inside of an hour. Cooper’s method of using weeks (which the UWO study had to follow for comparison purposes) does not offer the sort of time resolution needed to capitalize on any sort of daily trends. Future work should be done to compare the same ML method based on weeks, days and hours. It is clear that these differences would lead to varied results. It is my suspicion that a daily method would lead to increased profit over a weekly method.

Future Work

In their paper entitled ‘Efficient market hypothesis and forecasting’, Timmermann and Granger conclude that there are likely to be short-lived gains for the first users of new financial prediction methods. Once these methods become more widely used, their information may get incorporated into prices and they will cease to be successful [Timmermann 2004]. With the adaptive markets hypothesis in mind, future ML methods will give rise to many generations of financial forecasting methods. The success of these methods will depend on the originality of the model, proper application of ML techniques and the accuracy with which the model predicts the actions of investors. With the preliminary improvements presented in this report and further investigation into the mechanics of the complex market system, I see many opportunities for future research in this field.

REFERENCES:

Paulos, John Allen. A Mathematician Plays The Stock Market. Basic Books, 2003

Malkiel, Burton. “The Efficient Market Hypothesis and Its Critics”. Journal of Economic Perspectives 2003

Malkiel, Burton. A Random Walk Down Wall Street. New York: W. W. Norton, 1973

Cooper, M. Review of Financial Studies 1999; 12: 901-935

Gonçalves, Carlos Pedro . “Quantum Financial Market”. Net Logo Community Database. 2008 <http://ccl.northwestern.edu/netlogo/models/community/Quantum_Financial_Market>.

J. L. Kelly, Jr, A New Interpretation of Information Rate, Bell System Technical Journal, 35, (1956), 917–926

Johnson, Steven. Emergence: The Connected Lives of Ants, Brains, Cities, and Software. Scribner, 2002

Lo, Andrew W.. “The Adaptive Markets Hypothesis”. Journal Of Portfolio Management 2004

Yan, RJ, J Nuttall, and C Ling. Can Machine Learning Challenge the Efficient Market Hypothesis?. London: University Of Western Ontario, 2006.

Sullivan, Ryan, Allan Timmermann, and Halbert White. Dangers of data mining: The case of calendar effects in stock returns. Journal of Econometrics, 2001

Timmermann, Allan. “Efficient market hypothesis and forecasting”. International Journal of Forecasting 2004

Working, Holbrook. “Note on the Correlation of First Differences Of Averages In a Random Chain”. Econometrica 1960

Yan, Robert, John Nuttall, and Charles Ling. Application of Machine Learning to Short-Term Equity Return Prediction. University Of Western Ontario, 2006

This entry was posted on April 14, 2009 at 12:20 am and is filed under Research and Projects.

May 1, 2013 at 7:16 pm

Overall, predictability has been limited to a small percentage of hedge fund mathematicians, e.g. Simons – who incorporate ERGMs. You could set up a fractal complexity model of analysis of Pollack’s Drip Paintings correlative to chart prices… but stock prices don’t follow random walks. Great article, research and indepth overview.
